Human communication is full of unspoken assumptions. Common sense, the innate ability to understand these hidden meanings in a way that most people agree on, allows us to communicate quickly and efficiently. These building blocks of shared understanding and experience provide a foundation for how humans interact with one another and the world.

Machines lack this shared experience and therefore can’t fully understand the context necessary to answer questions that seem obvious to humans. Because humans regularly rely on such common sense, the lack of it in machines can block effective human-computer communication. Researchers have wrestled with this challenge for decades because it is so difficult to provide a reasonable substitute for our shared experience, or even to store commonsense knowledge in a form that is useful to an AI. That is why I am excited to share that the Salesforce Research team has made an important breakthrough in commonsense reasoning.

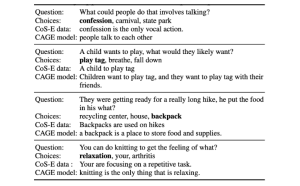

Commonsense Explanations Improve Model Accuracy

The Salesforce Research team introduces Commonsense Explanations (CoS-E), a new open-source data set of human explanations in the form of natural language sequences, and Commonsense Auto-Generated Explanations (CAGE), a novel model for training language models to answer multiple-choice questions and provide an explanation for each answer. Through this work we aim to give computers the ability to use commonsense knowledge to generate explanations for how they “think.”

These explanations not only provide more visibility into the “why” behind each answer and prediction, but can also improve performance. In fact, the CAGE model improves accuracy by 10 percent over the prior state of the art on CommonsenseQA 1.0.
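To make the explain-then-answer idea a little more concrete, here is a minimal Python sketch of how such a pipeline could be wired together. It is only an illustration under stated assumptions: the names `MultipleChoiceQuestion`, `build_explanation_prompt`, `answer_with_explanation`, `toy_explain`, and `toy_answer` are hypothetical, the prompt template is an assumed format, and the two toy functions merely stand in for trained language models. None of this is the team's actual implementation.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass(frozen=True)
class MultipleChoiceQuestion:
    """A question with its candidate answers (hypothetical container)."""
    question: str
    choices: Sequence[str]


def build_explanation_prompt(item: MultipleChoiceQuestion) -> str:
    """Format the question and choices as a prompt for an explanation generator.

    The template used here is an assumption made for illustration.
    """
    if len(item.choices) < 2:
        raise ValueError("a multiple-choice question needs at least two choices")
    *head, last = item.choices
    options = ", ".join(head) + f", or {last}"
    return f"{item.question} {options}? commonsense says"


def answer_with_explanation(
    item: MultipleChoiceQuestion,
    explain: Callable[[str], str],
    answer: Callable[[str, Sequence[str]], str],
) -> Tuple[str, str]:
    """Generate an explanation first, then answer with the explanation in view."""
    explanation = explain(build_explanation_prompt(item))
    answer_input = f"{item.question} {explanation}"
    return answer(answer_input, item.choices), explanation


def toy_explain(prompt: str) -> str:
    # Stand-in for a language model that generates an explanation.
    return "a refrigerator keeps food and drinks cold"


def toy_answer(text: str, choices: Sequence[str]) -> str:
    # Stand-in for a classifier: pick the first choice mentioned in the text.
    lowered = text.lower()
    for choice in choices:
        if choice.lower() in lowered:
            return choice
    return choices[0]


item = MultipleChoiceQuestion(
    question="Where would you store milk to keep it cold?",
    choices=["bookshelf", "refrigerator", "backpack"],
)
choice, explanation = answer_with_explanation(item, toy_explain, toy_answer)
print(choice)
print(explanation)
```

The point of the design is that the answering step sees the generated explanation alongside the question, so the explanation is both an input that can help the prediction and a human-readable account of it.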

By opening up AI’s black box with these commonsense explanations, we made an important discovery in deep learning: transparency does not have to come at the cost of accuracy. Previous efforts in the field to make models more explainable have limited the full capability of AI and ultimately hindered performance. But now we see that leveraging explanations both gives researchers and data scientists new levels of insight into the inner workings of their models and improves accuracy.

Smarter Communication, Stronger Human and Machine Relationships

Voice assistants and bots are becoming a part of our everyday lives. With this breakthrough we hope one day to transform the way we communicate with machines, making interactions more accurate and natural. For example, if you have an upcoming trip to New York planned and ask your voice assistant to make a dinner reservation, it could automatically connect the dots and recommend restaurants in NYC rather than in your hometown, without your having to say so explicitly. Leveraging language models for commonsense reasoning will enable intelligent systems to be more adaptive and to understand natural human language, opening up new possibilities for the way we work with and rely on computers.

Our team is headed to ACL 2019 (the annual meeting of the Association for Computational Linguistics) in Florence, Italy next month to share our research, but you can learn more about the Commonsense Auto-Generated Explanations (CAGE) model and the CoS-E data set in the team’s blog post. Congratulations to my co-authors, Nazneen Fatema Rajani, Bryan McCann, and Richard Socher.