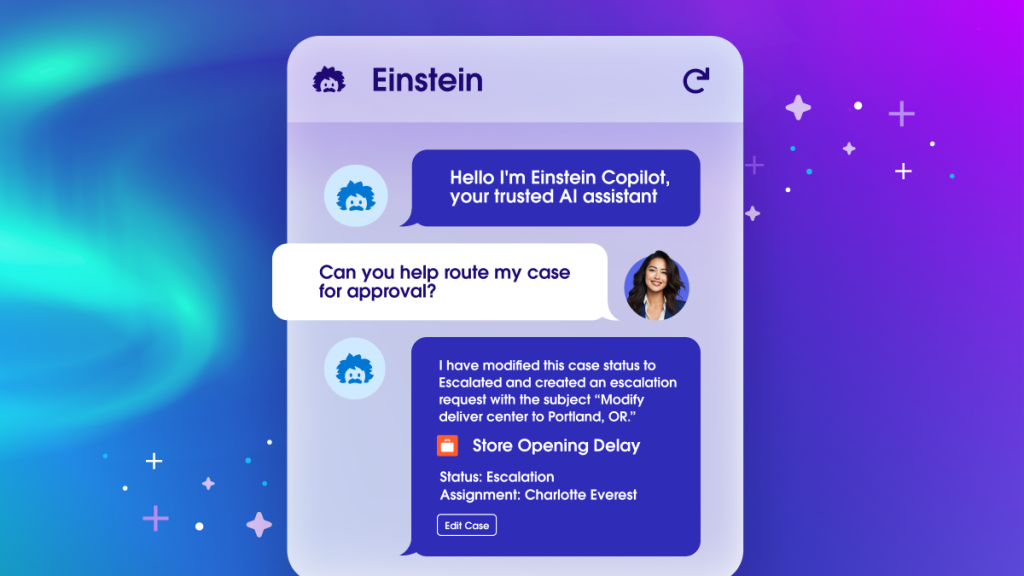

The recent rollout of Salesforce’s new conversational artificial intelligence (AI) assistant Einstein Copilot is a pivotal product launch that enhances our ways of working and saves valuable time. Like most of our customers, we were excited when the technology became available to pilot, and we were keen to use it to transform how our sellers sell, how our customer service agents find answers and how our marketers manage campaigns. In February, we started deploying Einstein Copilot within the Salesforce org, used by thousands of employees daily, and it’s already saving us countless hours of work. Here, we’ll share the real-world data and intel we gathered from this project in the hopes of helping you learn from our journey.

Laying the groundwork for deploying Einstein Copilot

As with any new corporate IT project, the first step is to identify the executive champions who’ll help you cut through red tape, remove obstacles and define clear goals. Getting buy-in from executives is important for any project’s success, particularly when it involves transformative technology like generative AI. Since Einstein Copilot is a pivotal product at Salesforce, our executives didn’t need much convincing. Still, their early support was crucial to our success, and getting leadership support for your project will reap similar rewards.

Once key stakeholders are on board, it’s critical to align on expectations and potential pitfalls in advance. When setting expectations for any deployment, it’s good to answer several key questions. Will the product be safe to use? Will it make users more productive? What are the most common reasons they’ll rely on the product, and how successfully will it deliver the desired results? For this project, we made sure to track a number of critical metrics such as daily active users, queries per session and user satisfaction.

Most importantly, we were clear with executives about the limitations of generative AI. It isn’t a magic silver bullet that can fix everything. It offers tremendous value and has tons of use cases, but it’s also prone to throwing an occasional curveball. We made sure everyone understood the likelihood of some unexpected outcomes in the course of the rollout.

The rollout

Despite the complexity of our internal Salesforce org, deploying Einstein Copilot took less than two hours. However, testing it against the vast amount of data in our Salesforce org took longer due to the thousands of objects, hundreds of thousands of fields, and billions of records in our Salesforce instance — as well as the hundreds of test queries we needed to evaluate.

This process revealed several areas for product improvement. For example, we saw that users needed recommended actions on account, contact, lead, opportunity and case record pages before engaging Einstein Copilot, so they would know the types of questions they can ask and the actions their AI assistant can take. To deliver extra value to our sales teams and help sellers use Einstein Copilot more efficiently, we expanded the use cases beyond standard actions. We quickly realized that we needed to roll out a few custom actions, which are AI actions tailored to specific business needs, such as giving sellers a daily update of their accounts or helping them prioritize their tasks (more on this below). Fortunately, creating custom actions in Einstein Copilot is quick and intuitive.

In terms of user base, we started small with 100 sellers. This gave us a well-controlled group for direct feedback, which proved essential during the rollout. Then, we progressively expanded the rollout to thousands of users.

So, what did we learn by deploying Einstein Copilot? Let’s take a look at our five biggest takeaways.

1. Start simple

Einstein Copilot offers customization options that let you tailor it to virtually any process or workflow. In our initial testing, we largely ignored these features. Instead, we relied on Copilot standard actions, a range of common tasks that are already built into the product. This way, we could start simple and let our users get a feel for a new way of interacting with customer relationship management (CRM) data. As our account executives began to use Einstein Copilot to summarize records, query data, and draft emails, we quickly saw the value of this approach. It’s simpler to set up and makes creating training and demos easier.

In partnership with our User Research and Insights team, we also developed a system to ask questions and get feedback to inform our next steps. Our initial user group had submitted some queries to Einstein Copilot that it couldn’t answer successfully. Reviewing those queries, along with user feedback, helped us identify what they expect from Einstein Copilot, and we were able to address those gaps by building custom actions. For example, we built one that lets users ask Einstein Copilot which tasks they should prioritize. Another lets them ask about any major changes to records they own.

Other custom actions included tasks like browsing websites to enrich account data and distilling multiple knowledge articles to generate answers about internal sales processes. Eventually, many of these custom actions will ship as standard actions, delivering even more productivity and personalization to our users and letting account executives focus on more impactful work.

2. Learn a new way of testing

Since Einstein Copilot was brand new, we took extra precautions in our testing. A baseline set of queries that you can test against and extend helps you assess whether a configuration change leads to more queries being answered accurately. For each Copilot standard action (like “draft an email”), we prepared a list of 30 potential user queries (like “draft an email to <contact>, tell them about our new products, and ask if they would like to meet”). We then manually checked to see if they were successfully executed. We aimed for an 80% success rate for all queries related to a supported use case. For the remaining 20%, we decided whether to document each query as a limitation or create a new custom action.

In total, we tested more than 250 queries. They became a foundational set of queries that we could check Einstein Copilot against every time we added a custom action or modified the configuration. Preparing a comprehensive list of queries is critical to assess if your implementation is at the level it needs to be. In general, we recommend that you create around 30 additional test queries for every custom action you assign or add.
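The success-rate check described above can be tallied with a few lines of Python. This is a minimal sketch, not part of Einstein Copilot: the `(action, query, passed)` tuple format, the function names and the sample data are all assumptions made for illustration, and the pass/fail values would come from your own manual review.

```python
from collections import defaultdict

TARGET_SUCCESS_RATE = 0.80  # the 80% target described in the article


def success_rates(results):
    """Return the share of passing queries per action.

    `results` is an iterable of (action, query, passed) tuples, a
    hypothetical format for recording manual review outcomes.
    """
    totals = defaultdict(int)
    passes = defaultdict(int)
    for action, _query, passed in results:
        totals[action] += 1
        if passed:
            passes[action] += 1
    return {action: passes[action] / totals[action] for action in totals}


def actions_below_target(results, target=TARGET_SUCCESS_RATE):
    """List the actions whose success rate falls short of the target."""
    rates = success_rates(results)
    return sorted(action for action, rate in rates.items() if rate < target)


# Made-up review outcomes: 4 of 5 pass for one action, 3 of 5 for the other.
sample_results = (
    [("draft_email", f"query {i}", i != 0) for i in range(5)]
    + [("summarize_record", f"query {i}", i < 3) for i in range(5)]
)

print(success_rates(sample_results))
print(actions_below_target(sample_results))
```

Rerunning a tally like this after each configuration change or new custom action shows quickly which actions have slipped below the target and need attention.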

3. Use Einstein Copilot for the right use case

We had a ton of ideas for Einstein Copilot use cases, but we made sure to ask key questions before implementing them. Would Einstein Copilot improve the user experience? Does the potential action fit within the product’s user interface? Will a conversational interface add value?

Not all AI experiences need to live within a conversational interface like Einstein Copilot, so these questions helped us decide which ideas would be covered with additional automation through Flow, which would be covered by a single call to the Einstein Trust Layer, and which ones truly belong within Einstein Copilot.

Remember, the goal is fewer clicks. If you’re replacing multiple clicks with a single Copilot Action, that’s probably a good use case. Think, also, about the value a conversational UI brings to the use case. The more conversational the use case, the better the fit for Einstein Copilot.

Keep in mind that large language models (LLMs), which Einstein Copilot is built on, are great at generating summaries and text, which makes them well suited to tasks like summarizing records and drafting emails. What’s more, conversational UX opens up possibilities for seamless interaction across various contexts. For instance, it lets users effortlessly summarize a case while browsing an account, or ask about discount approvals when they’re looking at opportunities.

In addition to a desktop version, Einstein Copilot is available on mobile devices. The voice-to-text feature on Salesforce Mobile is a huge time saver since we can speak much faster than we can type. Einstein Copilot can also help you create customized list views based on specific queries, such as identifying campaigns slated to begin the next month or listing opportunities worth over $100,000 that are expected to close in the same timeframe.

More and more, you’ll want to consider when it’s better to use Salesforce Automation features like Flows and Apex, or generative AI. The latter is better suited for dynamic workflows, but the real power comes from combining all of them to cover a use case. Indeed, nothing is preventing you from setting up a Copilot custom action to trigger an auto-launched Flow, and including a decision node that will then route to various custom prompt templates. This opens up a wealth of use cases.
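The routing idea above, where a decision step picks one of several prompt templates, can be illustrated in plain Python. This is only a conceptual sketch under stated assumptions: it is not Flow, Apex or any Salesforce API, and the template texts, record types and the `route_to_template` function are hypothetical.

```python
# Hypothetical prompt templates keyed by record type (illustrative only).
PROMPT_TEMPLATES = {
    "account": "Summarize recent activity for account {record_name}.",
    "opportunity": "Summarize deal status and next steps for opportunity {record_name}.",
}

# Fallback used when no specific template matches.
DEFAULT_TEMPLATE = "Summarize the record {record_name}."


def route_to_template(record_type, record_name):
    """Play the role of the decision node: choose a template and fill it in."""
    template = PROMPT_TEMPLATES.get(record_type.lower(), DEFAULT_TEMPLATE)
    return template.format(record_name=record_name)


print(route_to_template("Account", "Acme"))
print(route_to_template("Case", "00123"))
```

In a real org the branching would live in the Flow’s decision element rather than in code; the sketch only shows why a single entry point plus a routing step can cover many use cases.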

4. Enablement is key

It’s important to be explicit with users from the outset about which features they can use right away and which kinds of requests could be supported in the future. For example, in our testing, we saw users asking “what will be the weather in San Francisco tomorrow.” This wasn’t something Einstein Copilot could answer at first, but it’s something that could be built in as a custom action or assigned as a new standard action. Setting clear expectations about Einstein Copilot’s features and strengths while candidly communicating limitations has been key to improving satisfaction and success rates.

The backbone of our enablement strategy has been short demo videos that show how sellers can use Copilot Actions within their workflow to accomplish everyday tasks. We shared these shorts over a dedicated Slack channel with built-in workflows that capture feedback and offer additional documents like step-by-step instructions and sample queries. We also made sure our sellers were notified of new content through meetings and internal newsletters. Sharing the same information over multiple channels has been key.

5. Establish subject matter experts to provide feedback

As we learned, reviewing user queries within Einstein Copilot, especially ones that remain unanswered, can help us understand users’ expectations so we can iterate. Einstein Copilot also has a feature that lets you track your conversations with customers in the event log so you can understand what queries people are asking at scale (make sure to comply with local regulations for data privacy).

The best way to gather feedback is by holding sessions with small user groups. Ask them to test Einstein Copilot and share their queries and responses. This helps you build a list of real queries that you can use for testing or brainstorming new custom actions. In addition, make sure you have feedback loops in place to collect queries on a biweekly basis from your users.
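Once queries have been collected, ranking the unanswered ones by frequency is a simple way to see which custom action to consider next. The sketch below assumes each collected entry is a dictionary with `query` and `answered` keys; that format is hypothetical and is not the schema of the Einstein Copilot event log.

```python
from collections import Counter


def top_unanswered(log_entries, limit=3):
    """Rank unanswered queries by how often they were asked.

    Queries are lowercased and whitespace-normalized so that trivially
    different phrasings of the same request are counted together.
    """
    counts = Counter(
        " ".join(entry["query"].lower().split())
        for entry in log_entries
        if not entry["answered"]
    )
    return counts.most_common(limit)


# Made-up feedback entries for illustration.
entries = [
    {"query": "What will be the weather in San Francisco tomorrow", "answered": False},
    {"query": "what will be the weather in  San Francisco tomorrow", "answered": False},
    {"query": "Which tasks should I prioritize", "answered": False},
    {"query": "Summarize this account", "answered": True},
]

for query, count in top_unanswered(entries):
    print(count, query)
```

Exact-match counting after normalization is deliberately crude; grouping paraphrases of the same request would need manual review or a fuzzier comparison.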

New Copilot standard actions are released monthly, so your implementation of Einstein Copilot will evolve fast. Capturing feedback on a continual basis is key to identifying which actions to assign or build next for your Copilot. Look at your implementation as a continual improvement project rather than a “deploy and forget” one.

Getting the right feedback at the right time depends on strong collaboration between the business and IT. In our case, the Global Seller Experience team worked closely with the Business Technology team on the implementation, and with the Product team on the product itself. This gave us invaluable insight into what to improve next, and where.

A constant evolution

Implementing generative AI follows the same best practices as any other software deployment. Focus on high-priority and high-value use cases that are straightforward to execute and test, rather than solutions in search of a problem. Begin with simplicity, test with power users in a sandbox environment, quickly iterate based on feedback, and progressively scale the deployment.

Deploying Einstein Copilot has been an exciting journey filled with learning opportunities. We continue to work to evolve the platform and make it better, and we believe it will be a game-changer for Salesforce users. Stay tuned for updates and check out the various Einstein Copilot trails on Trailhead to ramp up your knowledge.