Early last year, every designer was asking the exact same question: Will this actually work? We tested the prompts, pushed the boundaries, and treated AI as an individual sport. It was one designer and one tool, seeking a singular moment of magic. And the answer we found was a resounding yes; the technology worked remarkably well. But a single designer generating a brilliant mockup is just an anecdote. A global team delivering a seamless, trusted customer journey is a system.

Here is exactly how our internal team updated our processes to solve the enterprise alignment problem, moving away from isolated experiments to orchestrate a unified, AI-empowered design standard.

Table of contents

- The 2025 Pivot: From Individual Heroics to Systems

- The Tool Stack: Evaluating the Top 3 Winners

- The AI-PM Framework: Why Experience is the Ultimate Feature

- Productivity & Quality: The Hidden Cost of Speed

- The Design Team POV: Navigating “AI Slop”

- Future Predictions

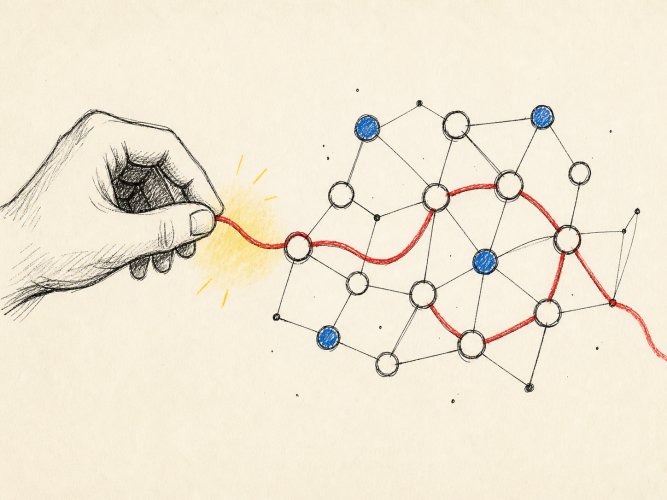

1. The 2025 Pivot: From “Will It Work?” to “How Do We Work?”

After the initial wave of exploration, the focus shifted.

We stopped asking what the tools could do, and started asking how our work should be done.

The real challenge was no longer generating outputs but creating consistency across teams, touchpoints, and decisions. Tools alone couldn’t solve that. What mattered was how they were embedded into everyday workflows.

So the focus shifted from optimizing individual tool usage to designing standardized processes.

2. The Tool Stack: Context is King

We weren’t looking for tools that could generate things; we were looking for tools that could help us think better, remember better, move faster, and stay organized without sacrificing quality. Based on an extensive review of what actually changed our workflows, three tools stood out.

These top performers all share one critical trait: they work best when grounded in your actual documents, projects, and conversations.

| Tool | Core Function | Why it Works for Enterprise Teams |
| --- | --- | --- |
| NotebookLM | Your Project’s Source of Truth | Grounds its answers in the documents you provide, which sharply reduces hallucination, though it does not eliminate it. It acts as the “left brain”: rigorous, reliable, and grounded in fact. |
| Gemini Gems | Your Creative Co-Pilot | Custom AI assistants built for specific needs with defined personas and context. Acts as the generative “right brain” to challenge assumptions and push explorations. |
| Slack AI | Internal Search Engine | Brings intelligent search, summarization, and question-answering to the existing workspace. Searches across channels and documents to surface answers in seconds. |

Our research found that a creative AI assistant attached directly to a NotebookLM notebook generates ideas grounded in actual research. For complex projects, this combination pairs the creative range of the “right brain” with the source-backed rigor of the “left brain.”

3. The AI-PM Framework

Relying purely on raw AI output is like eating a fast-food burger — fast, but ultimately lacking substance. AI has access to enormous amounts of information, but what it cannot do is bring wisdom to that information. AI can tell you what is generally true, but an experienced practitioner can tell you what is true right now, in this context, for these people.

The value of an expert is in the Tinkerbell dust. AI gives you the baseline, but expert judgment and experience applied on top transform it into something a client trusts. To formalize this, we developed the AI-PM Framework:

- Tier 1 (P0) — Setting the Vision: Stop thinking of yourself as the person doing the work alongside AI, and start thinking of yourself as the Product Manager overseeing it. You drive the vision, set the requirements, and define what “good” looks like.

- Tier 2 (P1) — Input Sanitization: Clean inputs are mandatory. Messy transcripts and unstructured notes degrade everything downstream. Garbage in, garbage out.

- Tier 3 (P2) — Output Judgment: AI surfaces; humans judge. AI treats all input data equally and will sometimes surface a passing, offhand comment from an interview as a key persona insight. Someone must review, filter, and verify after it.
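To make Tiers 2 and 3 concrete, here is a minimal sketch in Python. It is not our production tooling: the filler-word list, the `Insight` structure, and the two-participant threshold are all assumptions chosen for illustration. The idea is simply to clean transcript lines before they reach the AI, then sort the AI's surfaced insights by how many distinct participants support them, so a reviewer can spot a possible offhand comment quickly.

```python
import re
from dataclasses import dataclass

# Assumed filler words for illustration; tune the list for your own transcripts.
FILLERS = re.compile(r"\b(um+|uh+|you know)\b,?\s*", re.IGNORECASE)


def sanitize(line: str) -> str:
    """Tier 2: strip filler words and collapse runs of whitespace."""
    without_fillers = FILLERS.sub("", line)
    return re.sub(r"\s+", " ", without_fillers).strip()


@dataclass
class Insight:
    """A hypothetical record for one AI-surfaced insight."""
    theme: str
    participant_ids: list[str]  # who said something supporting this theme


def triage(insights, min_participants=2):
    """Tier 3: split insights by how many distinct participants back them.

    Both groups still go to a human reviewer. The split only tells the
    reviewer which insights rest on a single voice and deserve extra scrutiny.
    """
    corroborated, single_mention = [], []
    for insight in insights:
        support = len(set(insight.participant_ids))
        if support >= min_participants:
            corroborated.append(insight)
        else:
            single_mention.append(insight)
    return corroborated, single_mention
```

Counting distinct participants is a deliberately crude signal. It cannot tell you whether an insight matters, only whether it was repeated, which is why the final call stays with the person acting as the Product Manager.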

4. Productivity & Quality: The Hidden Cost of Speed

AI undeniably makes teams faster at writing user stories, generating personas, and mapping flows. A solo designer can now produce work that previously required a full team, which raises the floor for everyone.

However, speed is only half the story. What productivity headlines miss is that AI creates new work even as it eliminates old work. Output that looks polished can still lack fundamentals, shifting time from creation to verification. That preparation overhead and triple-checking rarely get counted in time-saving estimates. Teams get the most value when they treat AI as a collaborator needing management, not a machine delivering finished work.
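A quick back-of-the-envelope calculation shows how much the uncounted work can change the picture. The sketch below is illustrative only: the function name and every hour figure are hypothetical, not measurements from our team.

```python
def net_savings_pct(baseline_hours, ai_hours, prep_hours=0.0, verification_hours=0.0):
    """Time saved as a percentage of the pre-AI baseline.

    baseline_hours:      how long the task took without AI
    ai_hours:            hands-on time with AI assistance
    prep_hours:          time spent cleaning inputs before prompting
    verification_hours:  time spent checking and fixing the output
    """
    if baseline_hours <= 0:
        raise ValueError("baseline_hours must be positive")
    total_with_ai = ai_hours + prep_hours + verification_hours
    return 100 * (baseline_hours - total_with_ai) / baseline_hours


# Hypothetical task: 40 hours without AI, 20 hours of hands-on work with it.
headline = net_savings_pct(40, 20)
# Same task once 5 hours of input prep and 10 hours of verification are counted.
actual = net_savings_pct(40, 20, prep_hours=5, verification_hours=10)
print(f"headline: {headline:.1f}%  actual: {actual:.1f}%")
```

With these made-up numbers, the headline saving of 50% drops to 12.5% once preparation and verification are included. The exact figures will differ for every team; the point is that the estimate is only honest when all three terms are counted.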

5. The Design Team POV: AI creates both leverage and noise

When looking at how enterprise design teams are actually operating, the sentiment is cautious optimism rather than blind enthusiasm. Professionals are experiencing a core tension: excitement for the use cases, but wariness of unintended consequences.

The Wins:

- Reducing repetitive manual work and administrative overhead

- Synthesizing large datasets and research transcripts faster

- Accelerating early-stage wireframing and content drafts

- Saving roughly 15-20% of the time spent on project work

The Worries:

- Inconsistent accuracy creates a heavy validation burden — fixing an AI’s contextual errors can take longer than starting from scratch

- A relentless push for speed is producing shallow outputs and “AI slop”

- Over-reliance on AI-generated content risks the appearance of productivity without the substance — teams move fast but deliver less value than the output volume suggests

Professionals want clear guardrails to navigate this landscape. They require models grounded in domain-specific, highly reliable data to reduce hallucinations. Ultimately, teams want to leverage AI to elevate the baseline quality of their work and improve their quality of life, rather than just accelerating output.

6. Future Predictions

The most important changes are rarely the ones happening on your screen. They are the ones happening to the people sitting in front of it.

The Hybrid Human and the Question Nobody Is Asking

The next wave of workplace disruption will not be a tool. It will be a role. Hybrid positions are emerging everywhere: designers who write, strategists who prototype, researchers who ship. The assumption is that because AI can fill the gaps, one person can now cover the entire board. But the more urgent question is not can we do it all. It is should we?

Expertise is not just efficiency. A designer who has spent a decade understanding what looks right brings something no prompt can replicate. The risk of the hybrid era is not that roles will blend. It is that we flatten the peaks of deep expertise in the pursuit of versatile coverage. The strongest teams will be the ones that resist the urge to collapse roles, and instead use AI to let experts go deeper, not wider.

There is also a human cost hiding in the optimism. Blurring roles, accelerating demands, and the disappearance of linear career paths are not just organizational challenges. They are sources of real, compounding stress. There is no longer a clear ladder. There is a terrain that keeps shifting. That has consequences teams are only beginning to measure.

The New Default: AI Fluency Is Not a Differentiator Anymore

Prompting, AI literacy, even vibe coding are rapidly becoming table stakes, not talking points. Within the next few years, listing AI skills on a résumé will carry roughly the same weight as listing proficiency in Microsoft Office. It will be assumed.

What will not be assumed, and cannot be automated, is the commitment to learning that never ends. One certification, one course, one sprint of upskilling will not be enough. The half-life of AI knowledge is shortening faster than training programs can update. Every practitioner, at every level, will need to become a permanent student. Not as an aspiration, but as a job requirement.

The New Productivity Metric: Outcome Over Output

The old measure of productivity — tasks completed per unit of time — is already becoming obsolete. A designer who spends twenty hours building an agent that solves nothing has produced less value than one who spends a hundred hours building something that transforms a business workflow. Speed was always a proxy. The real currency was always impact.

This shift demands a different kind of accountability. Teams will need to evaluate not how much was made, but how much it mattered. That is a harder question to answer and a more honest one.

From Doers to Directors: The Agentic Shift

As agentic AI matures — systems capable of operating with greater autonomy to research, draft, test, and iterate on our behalf with minimal moment-to-moment human input — the human role in that loop will quietly but fundamentally change. We are moving from execution to supervision. From building to coordinating. From doing to approving.

This transition will also drive demand for a new category of tools: AI that is less about creative surprise and more about reliable precision. Products like NotebookLM succeed not because they are imaginative, but because they are trustworthy. The next frontier is not more powerful generation. It is more predictable output. Pre-trained agents calibrated to your brand, your voice, your domain. For most enterprise work, hallucination is not a feature. Accuracy is.

The tools that win the next era will be the ones that make AI feel less like a gamble and more like a process.