Core Resources

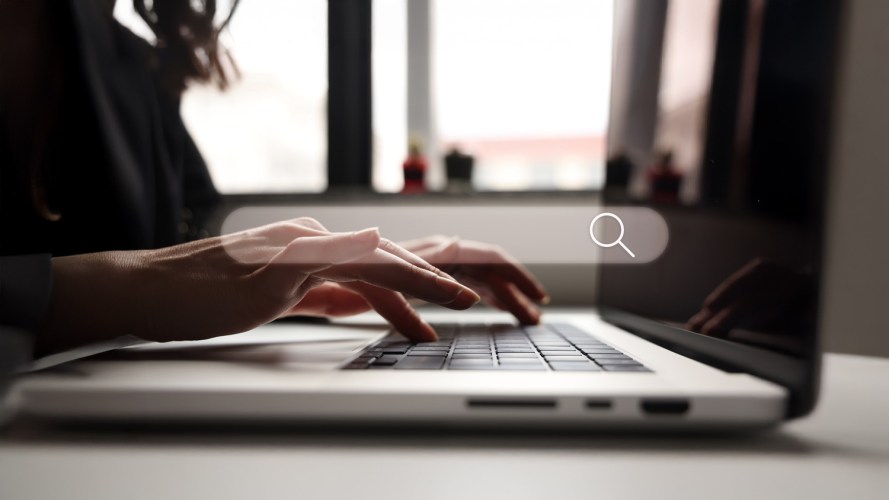

Start Your AI Journey

How can AI change the way you do business? Explore the fundamentals of this fast-moving technology.

AI Research

Salesforce’s AI Research team is all about diving deep, thinking ahead, and making things happen fast. Here’s what they’re up to.

Dig Deeper into AI

Elevate your AI expertise with in-depth articles that explore the complexities of the technology and its real-world applications.

Trusted AI

What are the ethical considerations around AI and how can companies use it responsibly? Here’s why trust, privacy, and safety matter more than ever.

Inspiring events. In-person and streaming.

Catch all the excitement and inspiration from our events while building your tech know-how.

Upcoming Webinars

On Demand

Explore AI Topics

Discover the resources you need to grow your knowledge and your career.

Learn valuable skills with Trailhead.

Follow guided learning paths and get hands-on to learn AI, data, and Salesforce skills from anywhere.

Generative AI for Organizations

Discover how generative AI can boost productivity throughout your organization.

Data Quality

Discover strategies for assessing and improving the quality of your data in Salesforce.