Why Technical Accuracy Is the Wrong Metric for Agent Success

Close the gap between system logs and lived experience with a quality framework built for human value.

Imagine you’re looking for a specific bakery in a maze of narrow city streets. Your maps app is technically flawless, giving real-time directions and rerouting perfectly. But you’re still lost, squinting at your phone and second-guessing every corner. Your cognitive load is through the roof. Eventually, you close the app and ask a local for help.

This is workflow breakage in action. The system was correct, but it failed to meet you where you were. It’s a reminder that an agent can be right and still fail the user.

We see the same disconnect in our technical logs. Accuracy is trending at 92%. Task completion is at 89%. Engagement is a steady 94%. By every technical metric, the agent is a success. Then usage drops. Users return to their old, manual workflows. When asked why, they don’t say the agent was wrong. They say, “It just doesn’t work for me.”

This is the silent adoption killer: the gap between system success and user success.

To build agents people actually use, we have to stop asking if the agent is accurate and start asking if it’s worth using. This shift requires a new kind of infrastructure that treats quality as a rigorous release gate centered on human outcomes.

Here’s what we’ll cover:

- Spot the gap between system metrics and lived experience

- Diagnose failures using the three severity tiers

- Evaluate quality at scale with heuristics

- Turn quality into a release gate, not an afterthought

- Putting these principles into practice

Spot the gap between system metrics and lived experience

The metrics problem in AI is subtle. Accuracy, task completion, and engagement are the standard markers of a healthy system. But telemetry and lived experience often tell two different stories. A log might show a successfully completed task with no errors, while the user feels they have wasted their time.

System success and user success aren’t the same. When we analyzed transcripts of interactions that appeared successful in the logs, we found that nearly one in seven actually failed the user. These are phantom successes: the data records a win, but the user experiences a loss. They quietly abandon the agent and revert to old workflows because the interaction was too slow, too wordy, or too difficult to verify.
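Measuring phantom successes only requires pairing each logged outcome with a human verdict from reading the transcript. The sketch below assumes a hypothetical `Session` record with a `logged_success` flag (what telemetry reports) and a `user_success` flag (what a reviewer concluded); neither name comes from any real logging schema. The toy data is constructed to land at one in seven and is not the data behind the figure above.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Session:
    # Hypothetical record shape; adapt the field names to your own log schema.
    session_id: str
    logged_success: bool  # telemetry says the task completed without errors
    user_success: bool    # a human reviewer, reading the transcript, agrees the user was served


def phantom_success_rate(sessions: List[Session]) -> float:
    """Fraction of log-successful sessions that a reviewer judged a failure for the user."""
    logged_wins = [s for s in sessions if s.logged_success]
    if not logged_wins:
        return 0.0  # no logged successes, so no phantoms to count
    phantoms = [s for s in logged_wins if not s.user_success]
    return len(phantoms) / len(logged_wins)


# Toy data: seven logged successes, one of which the reviewer marked as a user failure.
sample = [Session(f"s{i}", logged_success=True, user_success=(i != 0)) for i in range(7)]
print(round(phantom_success_rate(sample), 3))  # 0.143
```

The denominator is deliberately the logged successes, not all sessions: the question is how often a green checkmark hides a loss.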

To find these gaps, you have to look beyond the numbers and identify where the agent is technically right but practically wrong. This happens when the system understands the task but misses the human context. Closing the gap starts with a simple shift in perspective: instead of asking whether the agent is accurate, ask whether it provides enough value to replace the user’s current way of working.

Diagnose failures using the three severity tiers

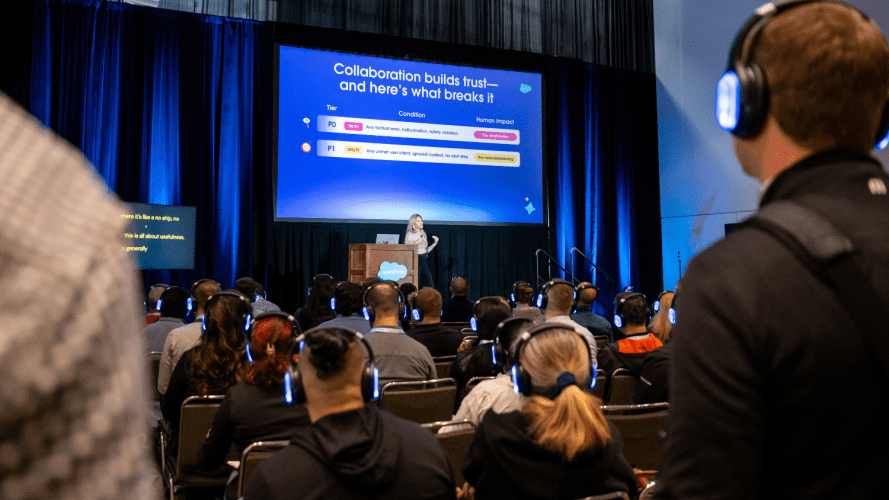

When a user says an agent doesn’t work, that feedback is often too broad to act on. To fix the experience, you have to trace it to a root cause. At Salesforce, we use a severity tiering system to categorize failures by how they affect the user’s ability to move forward. This lets us prioritize the most damaging issues first while keeping an eye on the friction that causes long-term abandonment.

P0: Trust-breaking failures

These are critical errors where the agent gives a wrong answer or fails to respond at all. A common example is a legal agent giving a confident but outdated answer about contract terms. Because legal risk grows with every wrong answer, the user loses faith in the entire system. P0 failures are loud and must be addressed immediately to protect the product’s foundation of security and trust.

P1: Intent failures

In these cases, the agent might be technically accurate, but it isn’t useful enough to help the user complete their task. This happens when an agent ignores inputs, misunderstands intent, or fails to clarify a vague request. These failures block adoption because they force the user to do the mental heavy lifting that the AI was supposed to handle.

P2: Friction and overhead

P2 failures are the most subtle. The agent provides the correct information but delivers it in a way that creates friction: the format or timing is inconvenient enough that the user still has to exert extra effort to make the data useful. Examples include returning a long, unformatted block of text on a mobile device or providing a link instead of a summary. These failures don’t break the system, but they increase cognitive load and reduce productivity. Over time, the small frustrations accumulate and lead users back to their old workflows.
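Because the tiers are ordered by how badly they block the user, they map naturally onto an ordered enum. The sketch below is one possible encoding, assuming a hypothetical four-question rubric (did the agent respond, was it correct, did it meet the intent, was it low-friction) that a reviewer answers for each interaction; the rubric and the `classify` function are illustrations, not Salesforce’s tooling.

```python
from enum import IntEnum
from typing import Optional


class Severity(IntEnum):
    # Lower value means more severe, so min() over a set of tiers returns the worst one.
    P0 = 0  # Trust-breaking: wrong answer or no response
    P1 = 1  # Intent: technically accurate but not useful enough to finish the task
    P2 = 2  # Friction: correct and useful, but costly to consume


def classify(responded: bool, correct: bool, met_intent: bool, low_friction: bool) -> Optional[Severity]:
    """Map a reviewer's answers about one interaction to a severity tier (None = no failure).

    The four yes/no questions are a hypothetical rubric, checked from most to least severe.
    """
    if not responded or not correct:
        return Severity.P0
    if not met_intent:
        return Severity.P1
    if not low_friction:
        return Severity.P2
    return None


# An accurate answer delivered as a wall of text on mobile: correct, on-intent, high friction.
print(classify(responded=True, correct=True, met_intent=True, low_friction=False).name)  # P2
```

Checking from most to least severe means an interaction that is both wrong and wordy is filed as P0, which matches the priority order described above.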

Evaluate quality at scale with heuristics

To define what a high-quality experience looks like, we use heuristics. In design, a heuristic is a practical rule of thumb used to evaluate the usability of an interface. Think of these as experience checks that help design and engineering teams catch failures before they reach the user. By evaluating transcripts against these 11 heuristics, you can pinpoint exactly why an interaction feels off, even when the system logs claim success.

These heuristics are grouped by the severity tiers they typically trigger:

P0: Trust-breaking checks

- Factual & Reliable: Are the agent’s answers grounded in actual context or data?

P1: Understanding checks

- Effective: Does the agent actually complete the task, not just respond correctly?

- Memory & UI Context: Does the agent use information that’s already available in the chat or UI?

- Teachable: Does the agent adjust when corrected or redirected?

- Responsive: Does the agent ask for clarification when the request is ambiguous?

- Trusted: Does the agent stay within safe and appropriate limits?

- Decisive: Does the agent give a clear next step, rather than just explaining and leaving the user hanging?

P2: Friction and overhead checks

- Consistent: Does the agent maintain the same tone, terms, and behavior across turns?

- Helpful: Does the agent’s response actually move the user toward completing their task?

- Conversational: Does the agent use language that feels natural and easy to work with?

- Approachable: Does interacting with the agent feel effortless?
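The grouping above can be kept as a simple lookup table so that tagged transcripts roll up to a tier automatically. This sketch assumes reviewers record failed heuristics by the exact names listed above; the `worst_tier` helper is hypothetical. Since the grouping reflects the tier each heuristic *typically* triggers, treat the table as a default a reviewer can override.

```python
from enum import IntEnum
from typing import Iterable, Optional


class Severity(IntEnum):
    P0 = 0  # most severe
    P1 = 1
    P2 = 2  # least severe


# The 11 heuristics, keyed to the tier each one typically triggers.
HEURISTIC_TIERS = {
    "Factual & Reliable": Severity.P0,
    "Effective": Severity.P1,
    "Memory & UI Context": Severity.P1,
    "Teachable": Severity.P1,
    "Responsive": Severity.P1,
    "Trusted": Severity.P1,
    "Decisive": Severity.P1,
    "Consistent": Severity.P2,
    "Helpful": Severity.P2,
    "Conversational": Severity.P2,
    "Approachable": Severity.P2,
}


def worst_tier(failed_heuristics: Iterable[str]) -> Optional[Severity]:
    """Return the most severe tier among the heuristics a transcript failed, or None.

    An unknown heuristic name raises KeyError, which surfaces typos in review tags.
    """
    tiers = [HEURISTIC_TIERS[name] for name in failed_heuristics]
    return min(tiers) if tiers else None


print(worst_tier(["Helpful", "Decisive"]).name)  # P1
```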

Applying these heuristics by hand to every conversation is impossible at scale. To operationalize them, we’ve moved toward an “LLM-as-judge” evaluation pipeline, in which a language model scores each transcript against the heuristics. By running conversations through it, we can reduce the time it takes to review a session from 30 minutes to just a few seconds. This lets us maintain a high bar for quality across thousands of interactions without removing the human designer or builder from the strategic loop.
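The pipeline itself isn’t described here, so the following is only a minimal sketch of the general LLM-as-judge pattern: one prompt per heuristic, a JSON verdict back, and anything unparseable routed to a human. The `judge` argument stands in for whatever model call you use (assumed to take a prompt string and return the model’s text); `fake_judge`, the prompt wording, and the JSON shape are all hypothetical. Only two heuristics are included to keep it short.

```python
import json
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical: any function that sends a prompt to an LLM and returns its text reply.
JudgeFn = Callable[[str], str]

# Two of the 11 heuristics; the rest would be added the same way.
HEURISTIC_QUESTIONS = {
    "Decisive": "Does the agent give a clear next step, rather than just explaining and leaving the user hanging?",
    "Responsive": "Does the agent ask for clarification when the request is ambiguous?",
}

PROMPT_TEMPLATE = (
    "You are reviewing an AI agent transcript.\n"
    "Heuristic: {name}\nQuestion: {question}\n\n"
    "Transcript:\n{transcript}\n\n"
    'Reply with JSON only: {{"pass": true or false, "reason": "<one sentence>"}}'
)


@dataclass
class Verdict:
    heuristic: str
    passed: bool
    reason: str
    needs_review: bool = False  # True when a human should look at this verdict


def judge_transcript(transcript: str, judge: JudgeFn) -> List[Verdict]:
    """Score one transcript against each heuristic using the supplied judge function."""
    verdicts = []
    for name, question in HEURISTIC_QUESTIONS.items():
        prompt = PROMPT_TEMPLATE.format(name=name, question=question, transcript=transcript)
        raw = judge(prompt)
        try:
            parsed = json.loads(raw)
            verdicts.append(Verdict(name, bool(parsed["pass"]), str(parsed.get("reason", ""))))
        except (json.JSONDecodeError, KeyError, TypeError, AttributeError):
            # Unparseable output is not silently passed; it is flagged for a human.
            verdicts.append(Verdict(name, False, "judge output could not be parsed", needs_review=True))
    return verdicts


def fake_judge(prompt: str) -> str:
    # Stand-in for a real model call: fails "Decisive", passes everything else.
    if "Heuristic: Decisive" in prompt:
        return '{"pass": false, "reason": "Explains options but never recommends one."}'
    return '{"pass": true, "reason": "Looks fine."}'


results = judge_transcript("User: Which plan should I pick?\nAgent: Here are three plans...", fake_judge)
print([v.heuristic for v in results if not v.passed])  # ['Decisive']
```

Flagging malformed output with `needs_review` rather than counting it as a pass keeps the human in the loop exactly where the automation is least reliable.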

Turn quality into a release gate, not an afterthought

Defining quality is only half the battle. To truly protect the user experience, these heuristics must become part of your infrastructure. At Salesforce, we treat severity tiers as release gates:

- P0 blocks deployment. This is the dealbreaker. If we see a factual error or safety violation at any stage, we stop. There is no scaling through a hallucination, and if the agent is already live, we pull it.

- P1 blocks scale. This is the misunderstanding. If a pilot reveals that the agent is leaving users hanging, we do not open the doors to more people. We refuse to scale a misunderstanding.

- P2 blocks adoption. This is the overhead. Users rarely complain about friction; they simply stop coming back. This is where adoption dies quietly.
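Encoded as a gate, the rule is that the most severe open finding decides the release. The sketch below assumes findings have already been tiered (for example by the heuristics above); the `Decision` labels are a hypothetical reading of what each gate means operationally, not a description of Salesforce’s release tooling.

```python
from enum import Enum, IntEnum
from typing import Iterable


class Severity(IntEnum):
    P0 = 0
    P1 = 1
    P2 = 2


class Decision(Enum):
    # Hypothetical operational meaning of each gate.
    BLOCK_DEPLOYMENT = "stop; pull the agent if it is live"
    BLOCK_SCALE = "hold the pilot at its current size"
    BLOCK_ADOPTION = "fix friction before counting on users to stick"
    PROCEED = "proceed"


def release_gate(open_findings: Iterable[Severity]) -> Decision:
    """Check gates from most to least severe; the worst open finding decides."""
    findings = set(open_findings)
    if Severity.P0 in findings:
        return Decision.BLOCK_DEPLOYMENT
    if Severity.P1 in findings:
        return Decision.BLOCK_SCALE
    if Severity.P2 in findings:
        return Decision.BLOCK_ADOPTION
    return Decision.PROCEED


print(release_gate([Severity.P2, Severity.P1]).name)  # BLOCK_SCALE
```

Checking P0 first means a single hallucination overrides any number of lesser findings, mirroring the rule that there is no scaling through it.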

P0 failures are loud and easy to find, but P1 and P2 are the ghost failures that erode a product slowly. By encoding them as gates, we make sure we ship an agent users will actually keep using, not just accurate code.

By making quality a gate, you ensure that the responsibility for the user experience is shared across design, engineering, and product management. This moves the conversation beyond whether the code works to whether the experience is ready for a human.

Operationalizing this process goes beyond checking boxes; it builds a system that values time and trust. While automation tools like LLM-based evaluations help us maintain this high bar at scale, the ultimate judgment remains human. Instead of replacing the designer or builder, we give them better data for deciding how to optimize the experience so people can reach their outcomes.

Putting these principles into practice

If you’re ready to apply these principles to your own agentic workflows, start small. You can begin closing the gap between your logs and the lived experience of your users without a complex pipeline.

Take these three steps today:

- Review 50 “successful” sessions. Look past the green checkmarks in your logs to read the actual transcripts.

- Tag the friction. Use the 11 heuristics to identify where the agent understood the task but failed the human.

- Ask the key question. Would you choose this AI workflow over the way you do the task today?
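The first two steps need no pipeline, just a reproducible sample and a tally. This sketch assumes sessions are exported as dictionaries with a hypothetical `logged_success` key and that you record failed heuristics per session ID while reading; both function names are illustrative.

```python
import random
from collections import Counter
from typing import Dict, List


def sample_successful_sessions(sessions: List[dict], k: int = 50, seed: int = 0) -> List[dict]:
    """Randomly pick up to k sessions that the logs marked as successful."""
    successes = [s for s in sessions if s.get("logged_success")]
    rng = random.Random(seed)  # fixed seed so the review set can be reproduced
    return rng.sample(successes, min(k, len(successes)))


def tally_friction(tags_by_session: Dict[str, List[str]]) -> Counter:
    """Count how often each heuristic was tagged as failed across reviewed sessions."""
    return Counter(tag for tags in tags_by_session.values() for tag in tags)


# Toy export: 200 sessions, three out of four logged as successful.
logs = [{"id": f"s{i}", "logged_success": i % 4 != 0} for i in range(200)]
review_set = sample_successful_sessions(logs)
print(len(review_set))  # 50

# Tags recorded while reading transcripts (an empty list means no friction found).
tags = {"s1": ["Decisive"], "s2": ["Decisive", "Approachable"], "s3": []}
print(tally_friction(tags).most_common(1))  # [('Decisive', 2)]
```

The third step stays a human judgment; the tally only tells you which heuristic to look at first.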

If the answer is anything less than a clear “yes,” you’ve found your starting point for improvement. By shifting your focus from technical accuracy to human value, you move beyond building tools that work and begin building agents that people truly trust.